The Babylon Effect

On what hyper-personalization costs us — and what designers should be protecting

We have a funny yet timeless idiom in Turkish — “leb demeden leblebiyi anlamak” — literally, understanding “leblebi” before you even finish saying “leb.” It’s what you say about someone wise enough to finish your thought before you’ve had it.

Lately, I’ve been having a lot of “leb…” moments. My music app senses my mood before I’ve named it. My email client drafts messages that sound like me. My AI assistant finishes sentences I wasn’t sure how to end. These tools have started to feel less like tools and more like extensions of myself. Sometimes that feels uncanny.

We’re moving into an age of hyper-personalization, where everything we see, read, and do gets recorded, fed back through AI models, and gradually shapes how we see the world. I don’t think most of us have really sat with what that means yet.

There’s another dimension to this that I find even more interesting. Beyond the algorithmic side, people are now building and remixing their own digital tools: “vibe coding” experiences that reflect their personality, workflow, even their mood. That’s a different kind of personalization. For instance, a parent created a baseball app just to track his own kids’ schedules and game stats. A friend created an app to pick out her clothes in the morning. These are self-driven use cases that I find fascinating. You’re not just being adapted to; you’re actively shaping your own digital context with your AI teammate.

Together, these — algorithmic personalization and hyper-personalized apps — are creating something I hadn’t quite anticipated: fragmentation at scale.

At the Swell conference in Hawai’i last year, a startup called New Generation demonstrated an AI that generates a different website for every visitor. You ask a question and the whole page changes in real time, just for you: the layout, the content, the tone. This is not personalization in the old sense; it is an AI building a highly tailored reality for you.

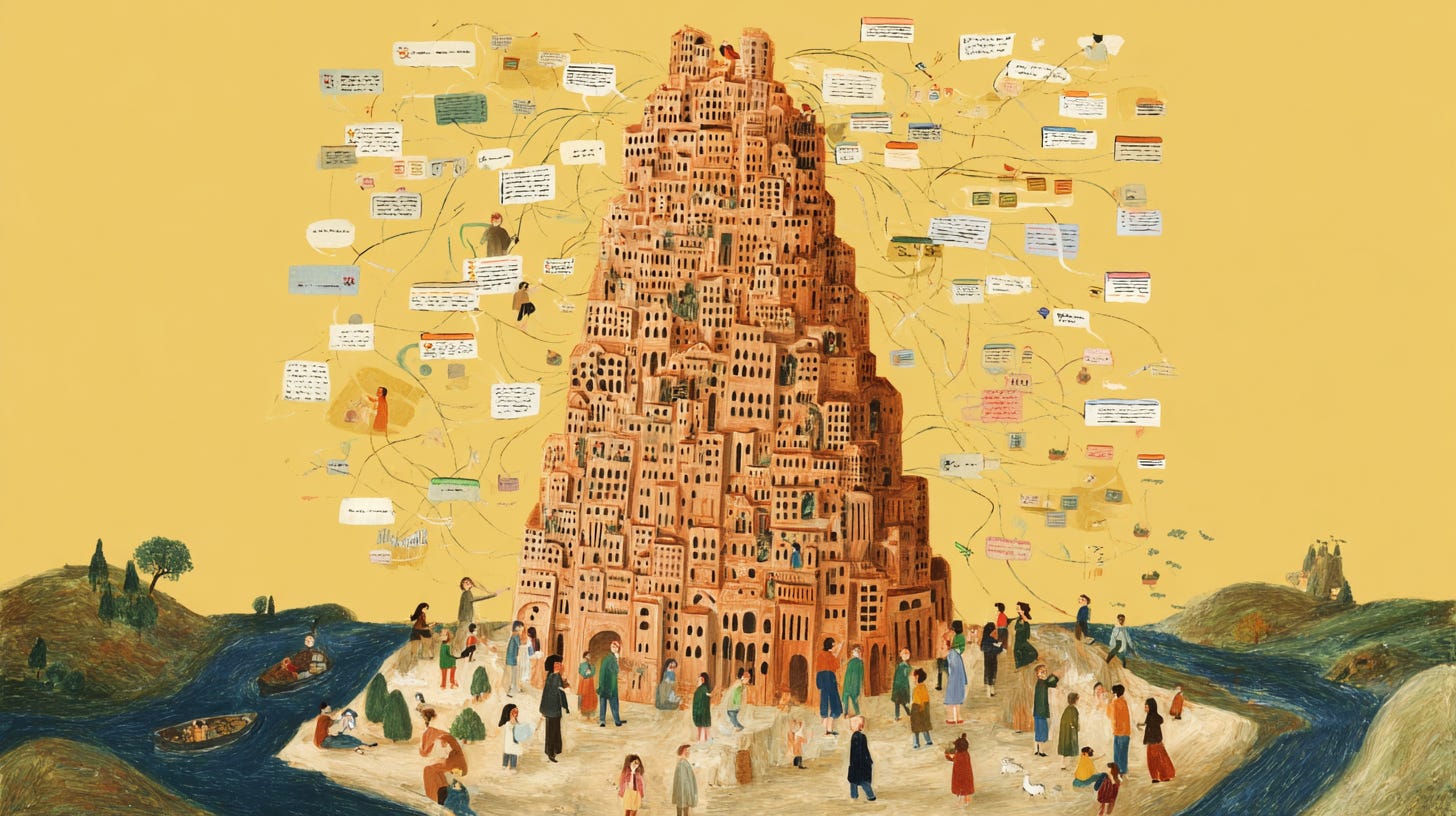

When I saw that demo, I couldn’t stop thinking about what it means for us as designers. At some point, I had an epiphany: this felt like a modern version of the Tower of Babel story.

In this biblical story, people are building toward the sky together — until their language splinters. No one can understand each other anymore. The project stops. They scatter.

Something structurally similar may be happening in digital products right now.

I call it the Babylon Effect: millions of small, AI-built personal realities, each one different, each one optimized for one person. You and I can visit the same product and see different layouts, read different copy, receive different framings of what the product even is. We already live in filter bubbles shaped by social media.

The next version is more thorough — your agent knows your writing style, your preferences, and your decision patterns. The upside of context-awareness is obvious. The downside is harder to see: when everything is tailored, being understood becomes a form of isolation. Your context becomes illegible to anyone who doesn’t share it. You might have an agent who can speak for you perfectly — to other agents. But what about the other people?

I’ve started observing a quieter version of this in design critiques. Two designers, both using AI tools daily, both serious about their craft — but when they review each other’s work, something is off. They can feel a disagreement but can’t quite locate it. Their standards have quietly diverged. Each has spent months in a feedback loop with a model that learned their preferences — which prompts they use, which outputs they accept, where they push back. The model didn’t change. But their version of it did. Now one person’s “good enough” is the other’s “obviously AI.” They don’t have a shared craft vocabulary anymore. And because they were only ever comparing outputs, never the process, they didn’t see it coming.

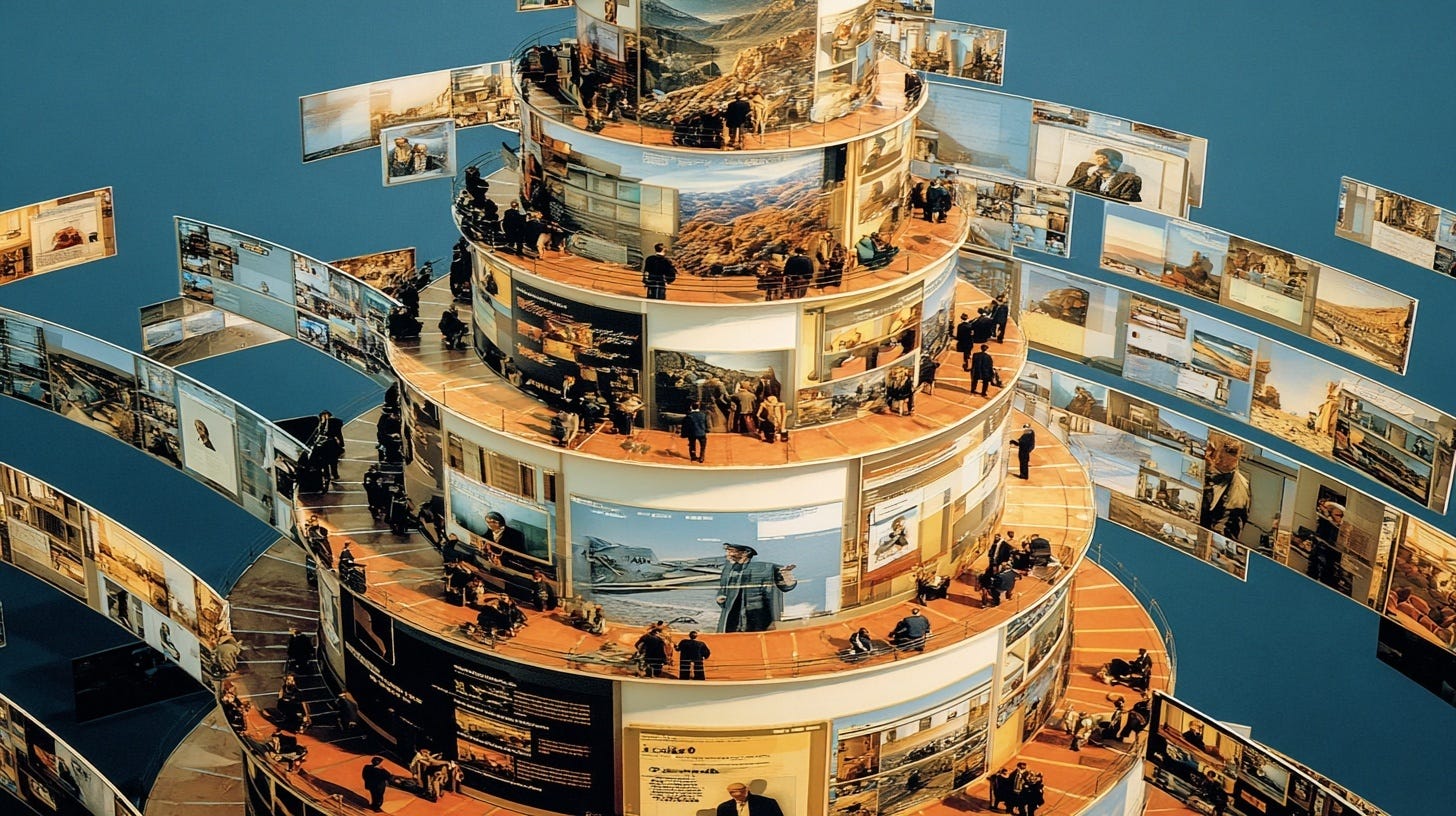

The piece that’s missing from most personalization is something obvious: the commons. Not a homepage. Not a shared dashboard. The commons is a layer where people can see what others are doing, even while their own experience stays personal. It’s the path you share with others and contribute to.

I think we already have early prototypes of this. Perplexity’s Discover page is one: a public map of what people are curious about. Notion’s template gallery is another: not your workspace, but a space for remixing what others made. Midjourney’s community feed. Sora’s video showcase. These aren’t accidental add-ons. These are designers and product teams instinctively reaching for the commons to counterbalance the pull toward isolation.

There’s a version of this yearning you can see in the wild already: the GitHub repos, the prompt libraries, the Notion templates people share constantly. Some of it is performance, a way of signaling you’re still on the wave. But underneath that, there’s a genuine impulse: here’s what I figured out, build on it. The problem is that the infrastructure isn’t quite there yet. People are reaching for the commons and ending up broadcasting instead.

What I find interesting is that these all look a lot like what I study in organizational culture: shared rituals that make a community accessible to itself. A ritual doesn’t erase individuality — it holds it. It’s the shared beginning that lets everyone know they’re in the same place, even if they arrived from different directions. In product design, we have very few equivalents of this idea.

Most product teams treat the commons as a growth feature — virality, social proof, network effects. That’s fine, but it undersells the function. The real job of shared spaces in a hyper-personalized product is cultural coherence. It helps users share a common understanding of what this product is, who uses it, and what it’s for.

A few things I think matter more than the standard advice here:

Shared first steps, not shared everything. You don’t need to freeze the whole experience, just the entry point. When everyone begins the same way, they share a common reference point. A new user who goes through a universal “Week 1” ritual has something to talk about with someone who did it a year ago. That’s worth designing intentionally rather than letting the algorithm skip past it.

Let the AI show its rationale. When personalization is invisible, people can’t interrogate it or talk about it. But when you surface why a recommendation is appearing and what signals the system is using, users gain a shared vocabulary for the product’s logic. That’s not just a trust feature. It’s a language. Two users who both understand how the system thinks can compare notes. Without it, they’re just comparing outcomes and left confused about the difference.

Build for agent interoperability. This one is further out, but I think it is underestimated. Right now, AI agents are becoming personal context silos — they know your preferences, your history, your style. If those agents can’t share relevant context with each other (with appropriate consent and control), we’ll end up with efficient individual tools that make collaboration harder, not easier. The design challenge isn’t only the agent-user interface, but also the agent-agent interface, and how humans stay in the loop across it.

Culture requires authorship, not just sharing. Perplexity’s Discover page shows me what others have searched for. That’s fine. But Notion’s templates go a step further — they show me what someone made. That’s qualitatively different. When users can build on each other’s work, personalization stops being just a feed and starts becoming a culture. The design question is: what can users make in your product that’s worth passing on?

There’s a version of hyper-personalization that ends up feeling like being very well served in a room alone. Every preference is met, with no friction and nothing surprising. I don’t think that isolated experience is what most people actually want, even if it’s what engagement metrics optimize toward.

The challenge over the next few years is balancing personalization with the idea of the commons. Which paths stay personalized? Which parts should remain in the commons? And honestly, most product teams in this frenetic AI race aren’t asking these questions.

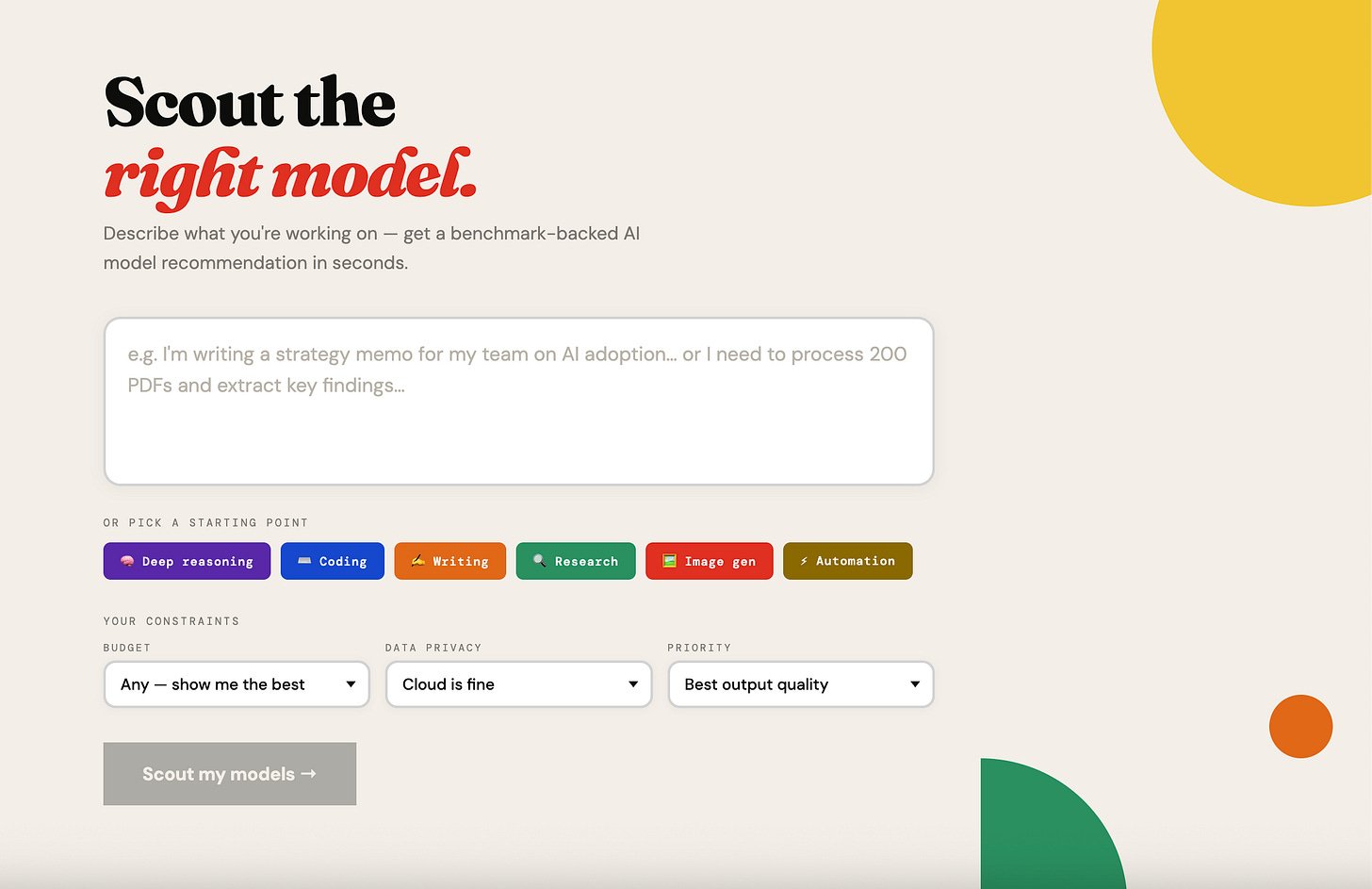

What I am tinkering with

I shared this earlier as a note and wanted to add it here as well. I’ve built a simple Model Scout tool to help you decide which model to use for what. Give it a try and let me know what you think.

Until next time, take good care of yourself and your loved ones.

I'm a priest in the digital world, and I often think about the Tower of Babel in just the ways you are talking about.

It's so meaningful to me as a person of faith - who loves and utilizes AI.

I believe that all humans have in common being made in the image of God. Our souls know one another, and no matter how individualized we get, we have a connection that cannot be broken.

Religion - and religious practice - makes this manifest.

It's not just ritual - it's ritual tied to meaning. Tied to our orientation to and dependence upon God.

I think it's more important now than ever.

Hyper-personalization is amazing, but without shared experiences, we risk everyone living in their own isolated digital bubble